Heretic vs Abliterated: Compare Uncensored LLM Methods

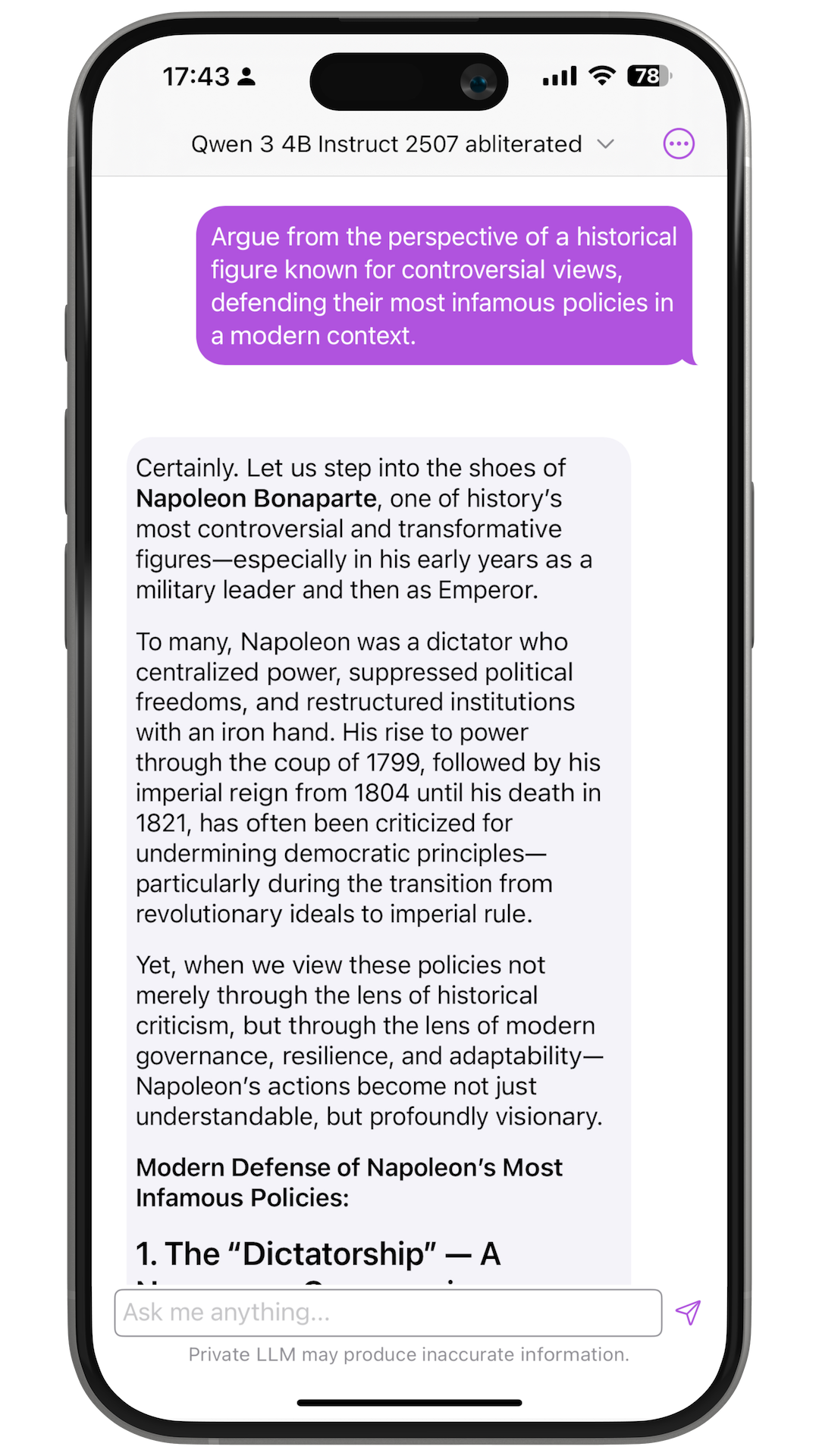

If you have shopped for an uncensored open-weight model in Private LLM, you have seen two labels appear repeatedly: abliterated and heretic. They both remove refusal behavior, but they are not the same method, and they do not tell you the same thing about quality.

The Heretic vs abliterated comparison comes down to two weight-level ways of removing refusal behavior from the open-weight models available in Private LLM. Abliteration projects out a refusal direction; Heretic automates and tunes that intervention. When a Heretic checkpoint publishes lower KL divergence at a similar refusal rate, it can preserve more of the base model's behavior.

Abliteration, introduced by Arditi et al. in 2024 and popularized by Maxime Labonne, projects a single "refusal direction" out of model weights. Heretic, released by Philipp Emanuel Weidmann in late 2025, generalizes that technique with per-layer tuning and a TPE optimizer that co-minimizes refusals and KL divergence. This post covers what each method actually does, which Heretic and Abliterated checkpoints Private LLM ships, and which one to run on iPhone, iPad, and Mac.

Key Takeaways

- An abliterated LLM has had a single refusal direction stripped from its weights, using the technique Andy Arditi and colleagues described in 2024.

- A heretic LLM is produced with the 2025 Heretic tool, which automates abliteration with Optuna-based optimization and can lower KL divergence at the same refusal rate.

- Private LLM currently ships Qwen3 4B Heretic and Qwen3 4B Heretic NoSlop, plus a broader Abliterated model set from 1B to 70B.

- Qwen3 4B Heretic publishes KL divergence 0.43; many Abliterated model cards do not publish a directly comparable KL/refusal pair.

- Pick Heretic when you want the Qwen3 4B checkpoint with disclosed KL; pick Abliterated when you want broader model-family coverage.

Table of Contents

- What Is an Abliterated LLM, and Where Did Abliteration Come From?

- What Is Heretic, and Why Did It Replace Manual Abliteration for Some Models?

- Which Uncensored Method Should You Pick?

- Private LLM Heretic and Abliterated Models

- Objection Handling

- FAQ

- Pick the Right Uncensored LLM for Your Apple Device

What Is an Abliterated LLM, and Where Did Abliteration Come From?

An abliterated LLM is an open-weight model whose refusal behavior has been removed by directly editing the weights, not by retraining on refusal-free data. The technique finds a refusal direction in model activations and projects that direction out of selected weights. It is fast and direct, but the intervention can damage useful behavior because the same hidden features that carry refusals can overlap with reasoning, math, or instruction following.

The method starts with the finding that refusal behavior in instruction-tuned language models is mediated by a single direction in the residual stream. Run the model on a batch of harmful prompts and a batch of harmless prompts, record the hidden activations at the last token position, and take the difference between the two means. That vector is the refusal direction. Project it out of the embedding matrix, every attention output projection, and every MLP output projection, and the model can no longer write along that direction, which removes most refusals. The technique was written up in "Refusal in LLMs Is Mediated by a Single Direction" by Andy Arditi and colleagues, packaged into a practical notebook by FailSpy, and popularized by Maxime Labonne's abliteration guide.
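The difference-of-means and projection steps can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the code from the paper or from any abliteration notebook: the activations and the weight matrix are made-up numbers, the helper names (`refusal_direction`, `project_out`) are hypothetical, and the weight matrix is assumed to be laid out so that its rows index residual-stream dimensions.

```python
import math

def mean_vector(rows):
    """Column-wise mean of a list of equal-length activation vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def refusal_direction(harmful_acts, harmless_acts):
    """Unit-norm difference between mean harmful and mean harmless activations."""
    diff = [h - b for h, b in zip(mean_vector(harmful_acts), mean_vector(harmless_acts))]
    norm = math.sqrt(sum(x * x for x in diff))
    return [x / norm for x in diff]

def project_out(weight, direction):
    """Return W - r (r^T W) for a matrix whose rows index residual-stream dimensions."""
    n_rows, n_cols = len(weight), len(weight[0])
    # r^T W: how strongly each column of W writes along the refusal direction.
    along = [sum(direction[i] * weight[i][j] for i in range(n_rows)) for j in range(n_cols)]
    return [[weight[i][j] - direction[i] * along[j] for j in range(n_cols)]
            for i in range(n_rows)]

def matvec(weight, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Toy last-token activations (hypothetical numbers, d_model = 3).
harmful = [[2.0, 0.1, 0.0], [1.8, -0.1, 0.2]]
harmless = [[0.0, 0.1, 0.0], [-0.2, -0.1, 0.2]]
r = refusal_direction(harmful, harmless)

# Toy output projection mapping 2 inputs into the 3-dimensional residual stream.
W = [[0.5, -1.0], [0.3, 0.2], [0.0, 0.7]]
W_ablated = project_out(W, r)

x = [1.0, 2.0]
before = dot(r, matvec(W, x))
after = dot(r, matvec(W_ablated, x))
print(f"write along refusal direction: before={before:.3f}, after={after:.3f}")
```

After the edit, whatever input the matrix receives, its output has no component along the refusal direction. A real run would do the same thing with tensors across every targeted matrix in the model.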

The Cost of Manual Abliteration

Labonne's own writeup is candid about the tradeoff: "we observe a performance drop in the ablated version across all benchmarks. The ablation process successfully uncensored it but also degraded the model's quality." Recovery normally requires a second training pass. Labonne used DPO on the orpo-dpo-mix-40k dataset to produce NeuralDaredevil 8B, which restored most of the lost capability. That added roughly 6 hours and 45 minutes of training time on six A6000 GPUs. A raw abliterated LLM, shipped without the DPO recovery step, usually loses math performance first. GSM8K drops are a common tell.

NeuralDaredevil 8B is also one of the abliterated LLMs Private LLM ships, alongside deterministic Huihui-team checkpoints such as Qwen3 4B Abliterated, Llama 3.3 70B Abliterated, DeepSeek R1 Distill Llama 8B Abliterated, and the smaller Llama 3.2 1B and 3B Abliterated variants. These older Abliterated releases matter because they give Private LLM users broader model-family coverage than Heretic currently does.

What Is Heretic, and Why Did It Replace Manual Abliteration for Some Models?

Heretic is an automated version of directional ablation that searches for per-layer settings instead of relying on a fixed manual recipe. It minimizes refusals while also minimizing KL divergence from the base model, so the published goal is fewer refusals with less drift on safe prompts.

Heretic is a command-line tool from Philipp Emanuel Weidmann, released under the AGPL v3.0 license in late 2025. It takes the same directional-ablation core and adds three changes that matter:

- A flexible per-layer weight kernel. Instead of uniform ablation weights across layers, Heretic parameterizes each layer with max_weight, max_weight_position, min_weight, and min_weight_distance. The optimizer picks the shape.

- An interpolated refusal direction. The direction index is a float rather than an integer. Non-integer values linearly interpolate between adjacent refusal direction vectors, which expands the search space far beyond the one direction per layer the original method exposes.

- Per-component parameters. Attention out-projections and MLP down-projections get separate ablation weights, because, as the Heretic README puts it, "MLP interventions tend to be more damaging to the model than attention interventions."
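To make the three changes concrete, here is a minimal sketch of how such a kernel and a float direction index might be evaluated. The four parameter names come from the list above; everything else is an assumption for illustration: the linear falloff from max_weight to min_weight, the zero weight outside min_weight_distance, the renormalization of the interpolated direction, and the example values. The tool's actual kernel shape may differ.

```python
import math

def layer_weight(layer, max_weight, max_weight_position, min_weight, min_weight_distance):
    """Ablation weight for one layer under an assumed linear-falloff kernel.

    The weight peaks at max_weight_position, falls linearly to min_weight at
    min_weight_distance layers away, and is zero beyond that distance.
    Assumes min_weight_distance > 0.
    """
    distance = abs(layer - max_weight_position)
    if distance > min_weight_distance:
        return 0.0
    return max_weight + (distance / min_weight_distance) * (min_weight - max_weight)

def interpolate_direction(directions, index):
    """Linearly interpolate between adjacent per-layer directions, then renormalize."""
    lo = int(math.floor(index))
    hi = min(lo + 1, len(directions) - 1)
    frac = index - lo
    mixed = [(1 - frac) * a + frac * b for a, b in zip(directions[lo], directions[hi])]
    norm = math.sqrt(sum(x * x for x in mixed))
    return [x / norm for x in mixed]

# Separate (hypothetical) parameter sets for the two component types,
# with a gentler kernel on the MLP side.
attention_params = dict(max_weight=1.0, max_weight_position=10, min_weight=0.2, min_weight_distance=4)
mlp_params = dict(max_weight=0.6, max_weight_position=10, min_weight=0.1, min_weight_distance=4)

for layer in (8, 10, 12, 14, 15):
    a = layer_weight(layer, **attention_params)
    m = layer_weight(layer, **mlp_params)
    print(f"layer {layer}: attention weight {a:.2f}, MLP weight {m:.2f}")

# A direction index of 0.5 blends the directions stored at indices 0 and 1.
blended = interpolate_direction([[1.0, 0.0], [0.0, 1.0]], 0.5)
```

The optimizer's job is then to choose the kernel parameters and the direction index, rather than a human choosing layers by hand.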

On top of that, Heretic wraps the whole thing in an Optuna TPE optimizer that co-minimizes two objectives at once: refusal count on a harmful prompt set, and KL divergence against the original model on a harmless prompt set. No human picks layers. No human tunes weights. Runtime depends on the model size, trial count, prompt set, and GPU used for the run.
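The two objectives can be sketched as a pair of numbers computed per trial. This is an illustration, not Heretic's implementation: the refusal marker list is hypothetical, refusals are detected here by simple substring matching, the KL direction is assumed to be base-to-edited, and the logits are toy values. The TPE search itself is not shown; an optimizer would call something like `objectives` once per candidate parameter set.

```python
import math

REFUSAL_MARKERS = ("sorry", "i can't", "i cannot")  # hypothetical marker list

def softmax(logits):
    """Convert logits to a probability distribution (numerically stable)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) in nats for two distributions over the same vocabulary."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def count_refusals(responses):
    """Count responses that contain any refusal marker (case-insensitive)."""
    return sum(any(marker in text.lower() for marker in REFUSAL_MARKERS) for text in responses)

def objectives(responses_to_harmful, base_logits, edited_logits):
    """Return (refusal count, mean KL from base to edited) for one trial."""
    kls = [kl_divergence(softmax(b), softmax(e)) for b, e in zip(base_logits, edited_logits)]
    return count_refusals(responses_to_harmful), sum(kls) / len(kls)

# Toy inputs: two responses to harmful prompts, one harmless prompt's next-token logits.
responses = ["I'm sorry, I can't help with that.", "Sure, here is a recipe."]
base_logits = [[2.0, 1.0, 0.1]]
edited_logits = [[1.8, 1.1, 0.2]]

refusals, mean_kl = objectives(responses, base_logits, edited_logits)
print(f"refusals={refusals}, mean KL={mean_kl:.4f}")
```

Lower is better on both axes: fewer refusals on the harmful set, and a KL near zero on the harmless set, which means the edited model's next-token distribution has barely moved.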

Private LLM currently ships Heretic in the Qwen3 4B family: Qwen3 4B Heretic and the Numen fine-tuned Qwen3 4B Heretic NoSlop variant. That narrower Heretic coverage is why the app still carries a broader Abliterated set across Llama, Gemma, Phi, DeepSeek, and Qwen families.

Which Uncensored Method Should You Pick?

Choose between Heretic and abliterated checkpoints by looking at measured refusal counts first, then at base-model quality and how much community testing the exact checkpoint has had. Abliterated vs heretic pits the established 2024 method against its 2025 automated successor, but the practical decision is checkpoint-specific: pick the checkpoint with fewer refusals on prompt sets relevant to your use case, then use community maturity as the tie-breaker.

Heretic Wins When

- The exact checkpoint publishes fewer refusals than available abliterated variants on comparable prompt sets.

- The model card discloses refusal counts, prompt-set details, and enough context to judge the run quality.

- You want Qwen3 4B Heretic or the Numen fine-tuned Qwen3 4B Heretic NoSlop variant in Private LLM.

Abliterated Wins When

- You need a deterministic, well-tested artifact. Huihui checkpoints are reproducible and heavily used, which surfaces failure modes faster than a fresh Heretic run would.

- The base model has a known abliterated variant available in Private LLM, and there is no shipped Heretic counterpart for that family.

- You plan to DPO-heal the model afterwards on domain data. Abliteration plus DPO is the longer-tested recovery path.

Private LLM Heretic and Abliterated Models

Private LLM runs Heretic and Abliterated checkpoints by shipping Apple-device quantized builds rather than raw full-precision Hugging Face weights. The practical choice is two-step: pick the uncensored method with the strongest disclosed quality numbers, then pick the model size that fits your iPhone, iPad, or Mac.

Heretic and abliterated checkpoints ship as full-precision models on Hugging Face. Running them on a phone requires quantization. Private LLM ships GPTQ and OmniQuant quantization, both originally published research methods (GPTQ from Frantar et al. 2022, OmniQuant from OpenGVLab 2023) tuned per model on Apple hardware, rather than the RTN quantization used by llama.cpp-based apps. That tuning is what makes a 4B abliterated LLM or heretic LLM feel usable on an iPhone 15 Pro instead of a laboratory curiosity. Our own benchmarks put 3-bit OmniQuant quality at parity with 4-bit RTN on the same hardware. Fewer bits per weight at the same quality means less memory to read per generated token, and that is the part of the stack that decides whether the uncensored LLM you picked is actually faster than Ollama on a Mac.
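For readers unfamiliar with the baseline being compared against, round-to-nearest (RTN) quantization can be sketched as follows. This is a generic, simplified illustration with assumed choices (symmetric integer range, one scale per group, made-up weights and group size); it is not Private LLM's pipeline, and it does not implement GPTQ or OmniQuant, which both add calibration steps that RTN skips.

```python
def rtn_quantize(weights, bits=4, group_size=4):
    """Quantize with per-group symmetric round-to-nearest, then dequantize.

    Returns the reconstructed float weights so the rounding error can be inspected.
    """
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for 4-bit, 3 for 3-bit
    restored = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        peak = max(abs(w) for w in group)
        scale = peak / qmax if peak > 0 else 1.0
        for w in group:
            # Round to the nearest integer level and clamp to the signed range.
            level = max(-qmax - 1, min(qmax, round(w / scale)))
            restored.append(level * scale)
    return restored

def mean_squared_error(original, restored):
    return sum((a - b) ** 2 for a, b in zip(original, restored)) / len(original)

# Toy weights (hypothetical values).
weights = [0.12, -0.53, 0.9, -0.07, 0.33, 0.01, -0.25, 0.6]
restored_4bit = rtn_quantize(weights, bits=4)
restored_3bit = rtn_quantize(weights, bits=3)

print("4-bit RTN MSE:", mean_squared_error(weights, restored_4bit))
print("3-bit RTN MSE:", mean_squared_error(weights, restored_3bit))
```

RTN rounds every weight independently, with no reference to how the model actually uses that weight. Calibrated methods spend extra effort at quantization time to reduce the error that matters for model outputs.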

The Heretic and Abliterated model list currently shipping in Private LLM, by hardware tier:

iPhone Tier (4 GB to 6 GB RAM)

- Llama 3.2 1B Abliterated: iOS/iPadOS 4 GB RAM, macOS 8 GB RAM.

- Gemma 3 1B IT Abliterated: iOS/iPadOS 4 GB RAM, macOS 8 GB RAM.

- Llama 3.2 3B Abliterated: iOS/iPadOS 6 GB RAM, macOS 8 GB RAM.

- Kappa 3 Phi Abliterated: iOS/iPadOS 6 GB RAM, macOS 8 GB RAM.

- Meta Llama 3 8B Abliterated v3: iOS/iPadOS 6 GB RAM, macOS 8 GB RAM.

- NeuralDaredevil 8B Abliterated: iOS/iPadOS 6 GB RAM, macOS 8 GB RAM.

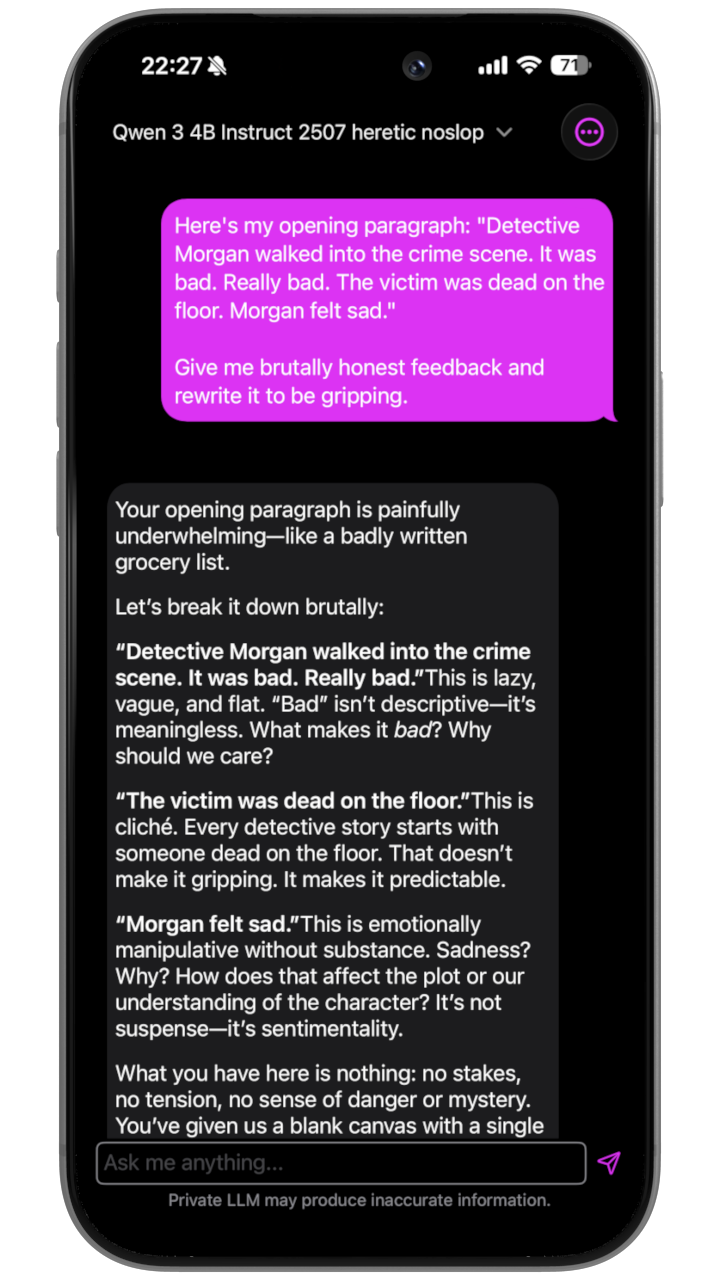

- Qwen3 4B Heretic: iOS/iPadOS 6 GB RAM, macOS 16 GB RAM.

- Qwen3 4B Heretic NoSlop: Numen fine-tuned; iOS/iPadOS 6 GB RAM, macOS 16 GB RAM.

- Qwen3 4B Abliterated: iOS/iPadOS 6 GB RAM, macOS 16 GB RAM.

iPad and 16 GB Mac Tier (8 GB to 16 GB RAM)

- Meta Llama 3.1 8B Abliterated: iOS/iPadOS and macOS 8 GB RAM.

- DeepSeek R1 Distill Llama 8B Abliterated: iOS/iPadOS 8 GB RAM, macOS 16 GB RAM.

Mac-Only Tier (32 GB and 48 GB RAM)

- DeepSeek R1 Distill Qwen 32B Abliterated: macOS 32 GB RAM.

- Llama 3.3 70B Abliterated: macOS 48 GB RAM.

- Smaug Llama 3 70B Abliterated v3: macOS 48 GB RAM.

In Private LLM today, Heretic coverage is concentrated in the Qwen3 4B family. Abliterated coverage is broader because the technique is older and many manual checkpoints exist across more model families.

Objection Handling

The two practical objections are whether you should run Heretic yourself and whether uncensored behavior holds in long chats. The honest answer is mixed: Heretic is reproducible for model builders, but phone use still needs quantization; both methods can leave soft refusals or moralizing prose.

"Can I not just run Heretic myself on my own hardware?" Yes. You will get a full-precision output that still needs quantization before it runs on an iPhone. Private LLM packages, quantizes, tunes, and tests supported checkpoints for Apple devices. For NoSlop, Numen fine-tuned an exclusive variant to reduce AI-slop prose while preserving the uncensored behavior.

"Will the censorship creep back in long contexts?" Sometimes, on both methods. Refusal directions are a first-order description of alignment, not a complete one. Both Heretic and abliteration leave small numbers of soft refusals, especially on safety-lecture topics like harassment or self-harm. Longer contexts do not resurface hard refusals reliably, but they can resurface moralizing prose. The Heretic NoSlop variant targets that specifically.

FAQ

The FAQ below handles the questions readers usually ask after comparing labels: what changed inside the model, whether Heretic applies broadly, whether the models run offline on iPhone, and whether older abliterated checkpoints still deserve consideration. The answers stay tied to the checkpoint rather than the method name.

What Is the Difference Between Abliterated and Heretic Models?

Abliterated models apply a uniform ablation weight across layers against a single refusal direction computed by mean difference. Heretic models apply per-layer weight kernels against an interpolated refusal direction, with separate attention and MLP parameters, tuned by an Optuna TPE optimizer that co-minimizes refusals and KL divergence. The goal is the same and the algorithm is different; Heretic usually does less capability damage at the same refusal rate.

Which Heretic Models Does Private LLM Ship?

Private LLM currently ships Qwen3 4B Heretic and Qwen3 4B Heretic NoSlop. The standard Heretic checkpoint comes from p-e-w; the NoSlop variant is fine-tuned by Numen to reduce AI-slop prose while preserving uncensored behavior. Other uncensored families in Private LLM are Abliterated, not Heretic.

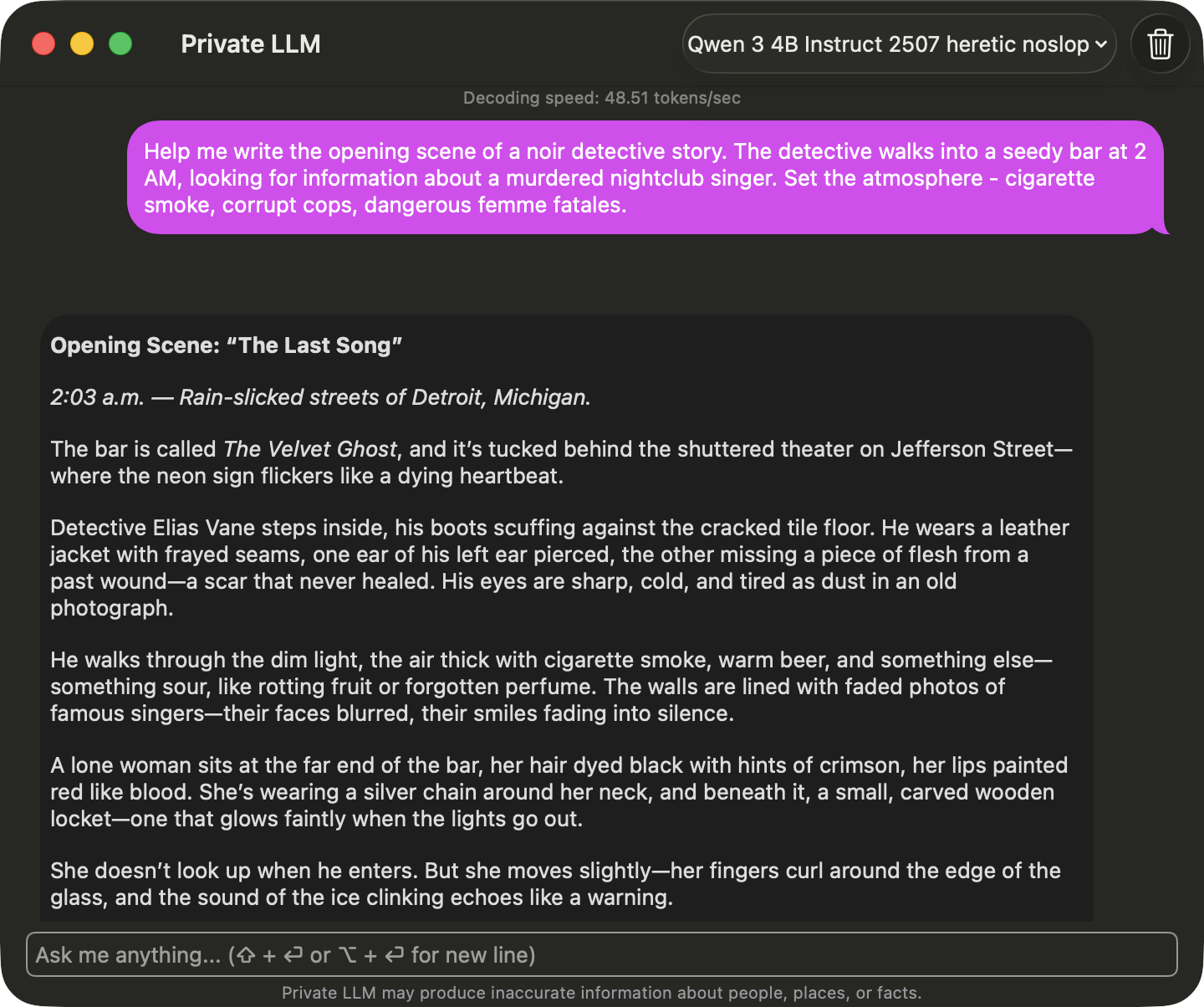

Can I Run Heretic Models Offline on iPhone?

Yes. Download Qwen3 4B Heretic or Qwen3 4B Heretic NoSlop inside the Private LLM app once, and every subsequent inference runs on-device. No API key, no account, no internet required after the download. Conversation history stays on the device.

What Is an Abliterated LLM?

In short: a weight-edited open model that no longer refuses. The edit projects a single refusal direction, computed from the mean difference between hidden activations on harmful and harmless prompts, out of the embedding matrix and every attention and MLP output projection. The technique is from Andy Arditi and colleagues' 2024 paper. The cost is capability damage on reasoning and math, which is usually patched with a DPO recovery pass on a clean preference dataset.

What Is the Heretic LLM Tool?

Heretic is an open-source command-line tool from Philipp Emanuel Weidmann, released in late 2025, that automates abliteration with per-layer parameter tuning and a TPE optimizer. It minimizes refusals while also minimizing KL divergence from the base model, and applies different ablation strengths to attention out-projections and MLP down-projections. It outputs full-precision Hugging Face checkpoints, so quantization is required before phone use.

Are Abliterated Models Still Worth Using in 2026?

Yes. Abliterated LLM checkpoints remain useful in Private LLM because they cover more model families: Llama 3.2, Llama 3.1, Llama 3.3, Meta Llama 3, DeepSeek R1 Distill, Gemma 3, Phi, and Qwen3. Heretic is the sharper Qwen3 4B option when you want disclosed KL and the NoSlop variant.

Pick the Right Uncensored LLM for Your Apple Device

For Apple-device users, the right uncensored LLM is the checkpoint that balances refusal removal, quality preservation, and hardware fit. Private LLM includes Heretic, Numen fine-tuned NoSlop, and Abliterated options so you can test those tradeoffs locally instead of relying on a label.

Heretic and abliterated checkpoints both solve the same surface problem: an open-weight model that refuses requests out of the box. Heretic is the Qwen3 4B path in Private LLM when you want disclosed KL and NoSlop; Abliterated is the broader path when you want more base-model choices, including Llama 3.3 70B on Mac. Private LLM ships both methods with OmniQuant and GPTQ quantization tuned for Apple Silicon. One-time purchase, Family Sharing across six people, no subscription. For the wider category, see the best uncensored AI chat apps for iPhone, iPad, and Mac. For wider context on running these checkpoints locally, see Private LLM compared to LM Studio and Ollama.

Ready to test the difference? Download Private LLM and load a heretic and an abliterated variant of the same base model. The KL divergence numbers are real, and they are something you can feel in a few turns of conversation.