Private LLM: Private, Uncensored AI Chat for iPhone, iPad, and Mac

No Cloud, No Tracking, No Logins.

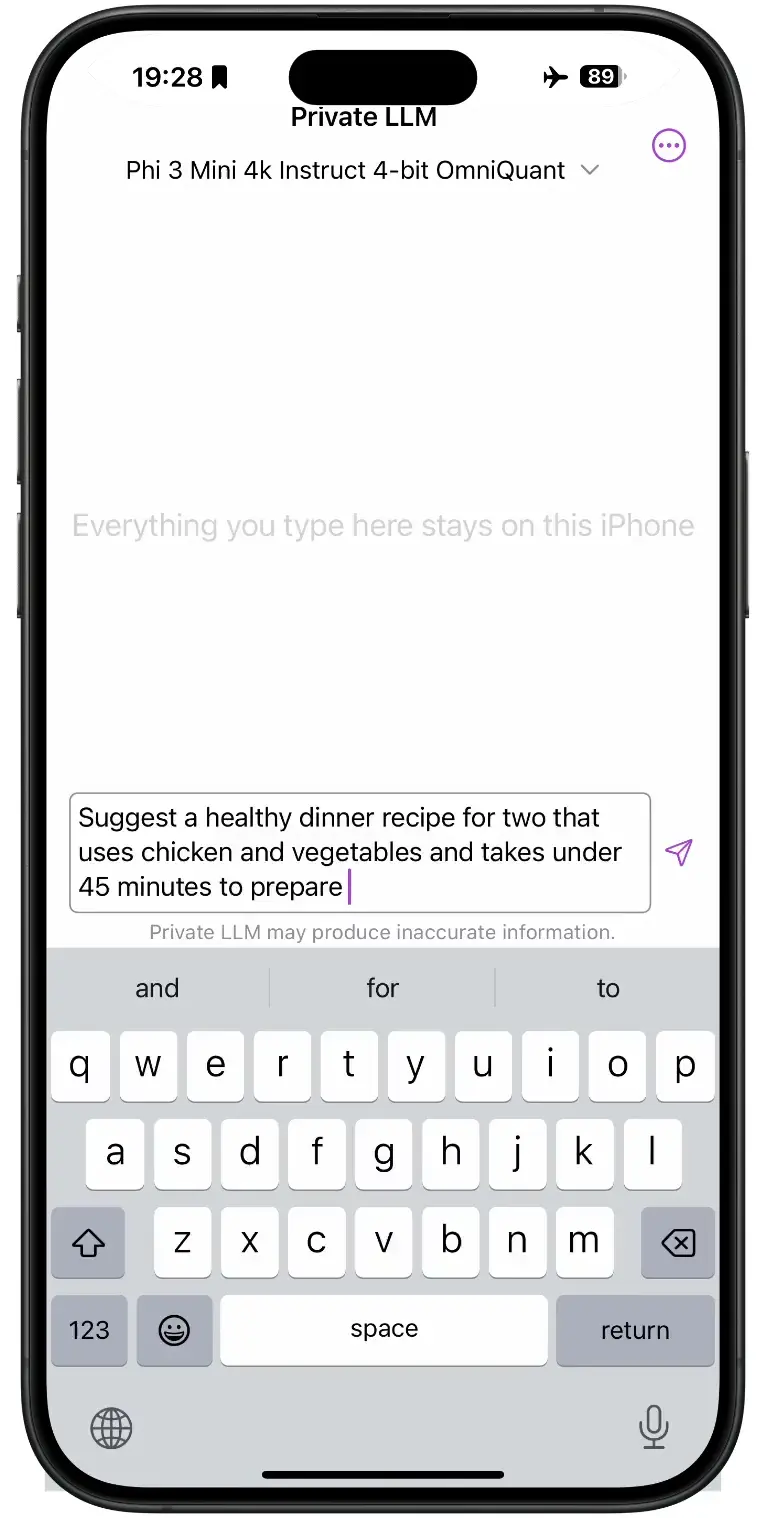

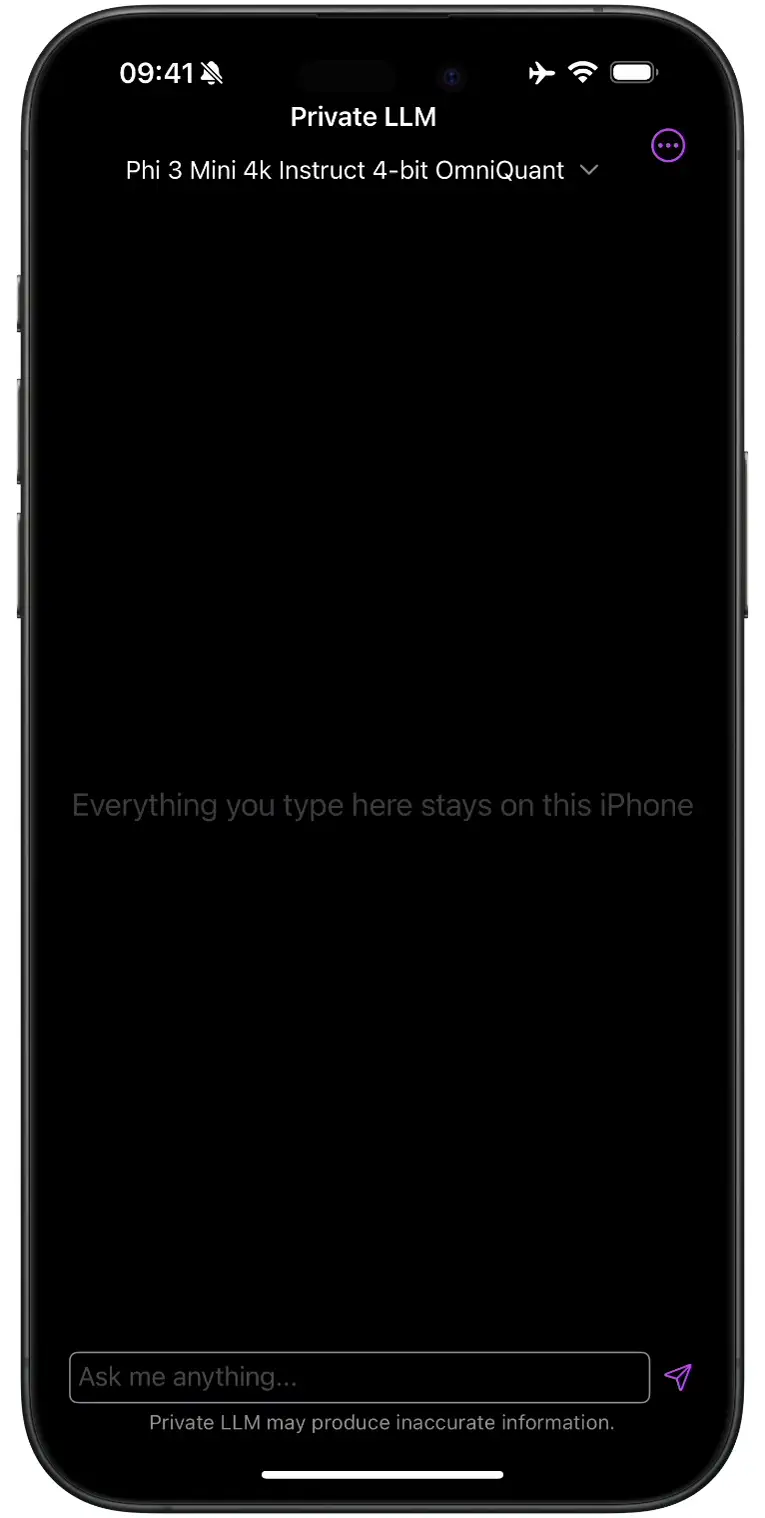

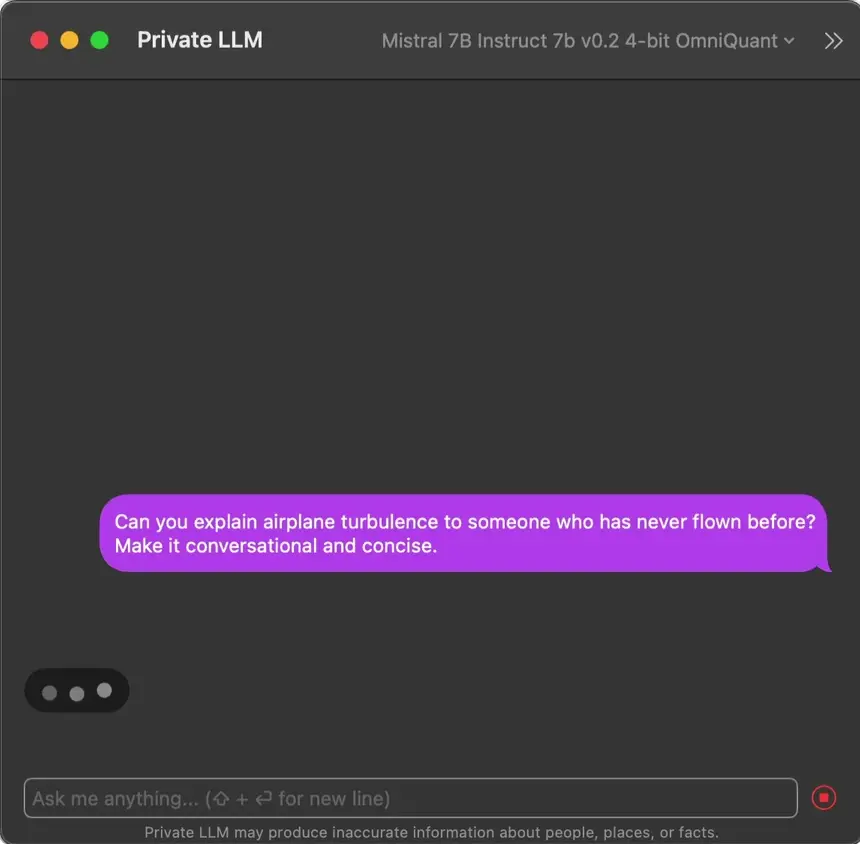

Run AI Offline on Your iPhone, iPad, and Mac

Private LLM runs entirely on your iPhone, iPad, or Mac. Your conversations never leave the device, and no internet is required after the first model download. No account, no tracking, no logs. One purchase unlocks the app across every Apple device you own and your Family Sharing group.

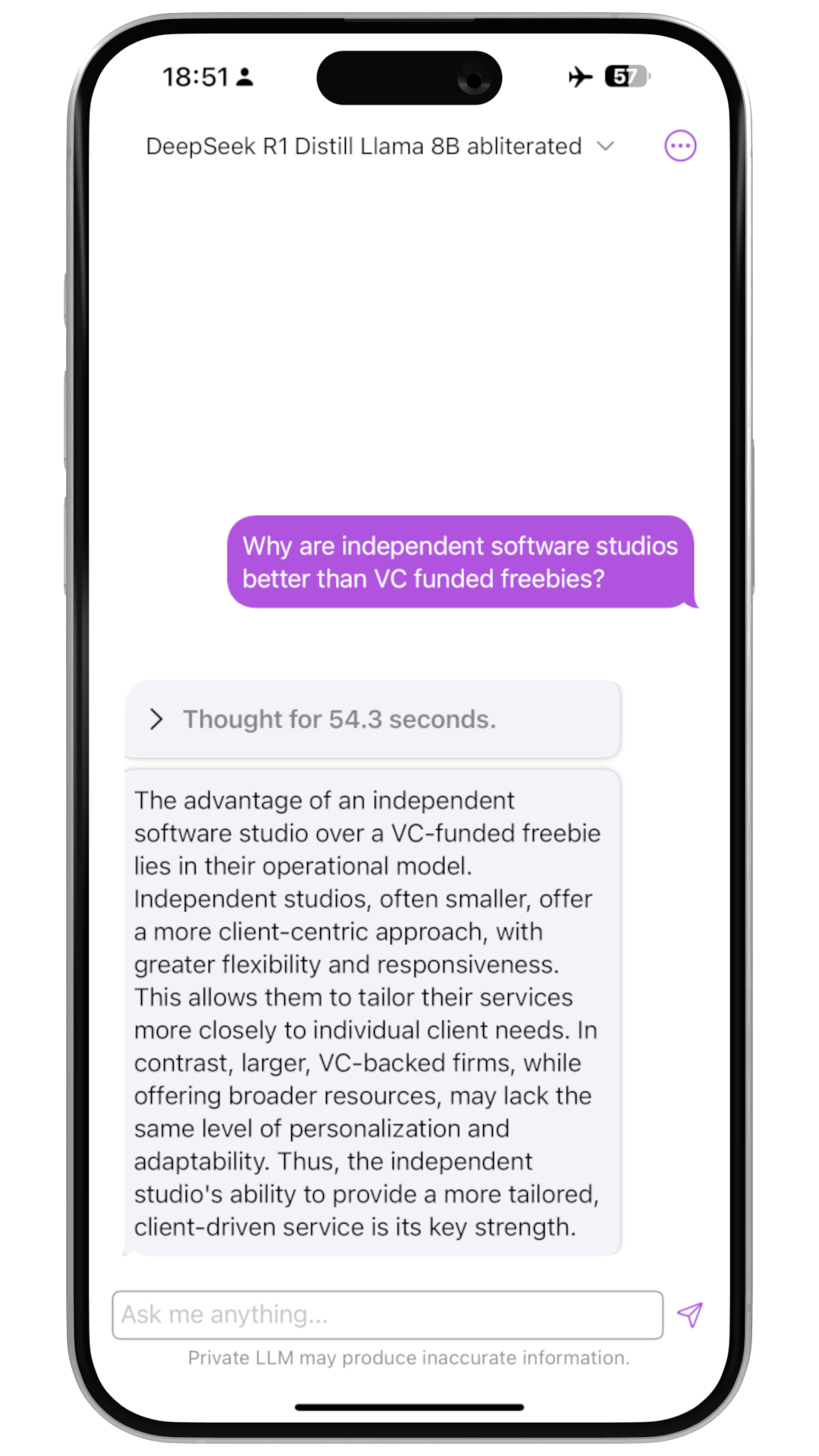

Run DeepSeek R1, Llama 3.3, Qwen3, and Gemma 3 Locally

Private LLM runs the leading open-source models directly on your Apple devices — DeepSeek R1 Distill, Llama 3.3 70B, Qwen3 4B, Phi 4, Google Gemma 3, and more. Every conversation stays on-device, and every model is quantized in-house for the best possible quality on your hardware.

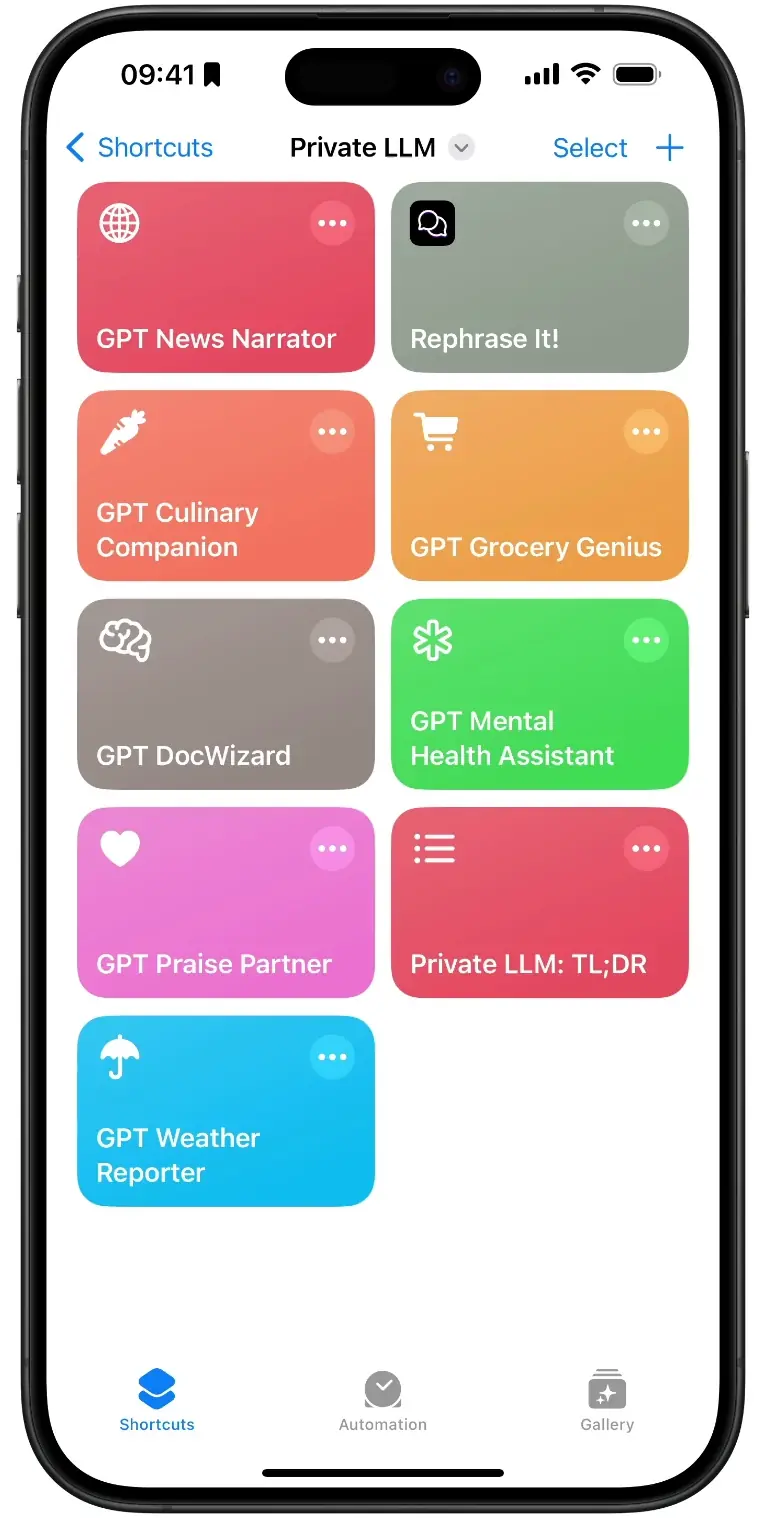

Local AI in Siri and Apple Shortcuts — No Code

Private LLM plugs directly into Siri and the Shortcuts app. Build AI-driven workflows that summarise text, generate writing, or pipe responses into any of the 70+ apps that support the x-callback-url specification. No code required.
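The x-callback-url specification defines URLs of the form `[scheme]://x-callback-url/[action]?[x-callback parameters]&[action parameters]`. The optional `x-source`, `x-success`, `x-error`, and `x-cancel` parameters tell the receiving app who called it and where to return. The Python below is a rough sketch of the kind of URL a Shortcut hands to a receiving app. The `examplenotes` scheme, its `create` action, and the `title`/`text` parameters are hypothetical placeholders, since each real app documents its own actions.

```python
from __future__ import annotations

from urllib.parse import quote, urlencode


def build_x_callback_url(scheme: str, action: str, params: dict[str, str], *,
                         source: str | None = None,
                         success: str | None = None,
                         error: str | None = None,
                         cancel: str | None = None) -> str:
    """Build [scheme]://x-callback-url/[action]?[x-callback params]&[action params]."""
    query: dict[str, str] = {}
    # Optional x-callback parameters defined by the specification.
    for key, value in (("x-source", source), ("x-success", success),
                       ("x-error", error), ("x-cancel", cancel)):
        if value is not None:
            query[key] = value
    # Action-specific parameters; their names are defined by the target app.
    query.update(params)
    # quote_via=quote encodes spaces as %20 (not '+'), and with urlencode's
    # default safe='' it also encodes ':' and '/' inside nested callback URLs.
    return f"{scheme}://x-callback-url/{action}?{urlencode(query, quote_via=quote)}"


# Hypothetical target app "examplenotes" with a hypothetical "create" action.
url = build_x_callback_url(
    "examplenotes", "create",
    {"title": "Summary", "text": "Private LLM output goes here"},
    source="Shortcuts", success="shortcuts://",
)
print(url)
```

In the Shortcuts app the same URL is typically assembled with a URL action and opened with Open URLs, so no code is needed; the sketch only shows what that URL contains.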

One Purchase, No Subscription — Family Sharing for Six

Skip the subscriptions. A single purchase unlocks Private LLM on iPhone, iPad, and Mac, and Family Sharing extends it to your whole Family Sharing group of up to six people at no extra cost. One payment gives everyone in your household private, on-device AI.

AI Writing Tools Built Into macOS

Select any text in any macOS app, right-click, and Private LLM rewrites, summarises, or corrects it — entirely on-device. Supports English and major Western European languages.

Built by Two Engineers, Not VCs

Private LLM is built by two engineers in the EU — bootstrapped, no VC funding, no growth-hacking roadmap. We are the only app on the App Store with OmniQuant and GPTQ quantization, which produce measurably better output than the RTN quantization used by MLX and llama.cpp wrapper apps like Ollama and LM Studio. We answer to users, not investors — which is why your data stays on-device and always will.

From the App Store

Real reviews from iPhone and Mac users

“This is a private AI app created by developers performing constant updates and not charging a subscription. That is rare nowadays! Bravo, looking forward to the updates as this continues to improve!”


OmniQuant and GPTQ Quantization: Better Output, Less Memory

Private LLM uses OmniQuant and GPTQ quantization. When LLMs are quantized for on-device inference, outlier weight values hurt text generation quality. OmniQuant modulates outlier weights with a learnable, optimization-based clipping mechanism that minimizes quantization error. GPTQ uses approximate second-order (Hessian) information to minimize reconstruction error on the weights that matter most. The affine RTN quantization used by MLX-based apps like LM Studio, and the block-wise RTN variants used by llama.cpp-based apps like Ollama, skip this kind of per-weight optimization — which is why those apps produce lower-quality output on the same Apple hardware. We constantly explore advanced quantization methods, work that wrapper apps built on third-party inference engines cannot take on. OmniQuant and GPTQ paired with optimized model-specific Metal kernels let Private LLM deliver text generation that is both fast and high-quality on Apple hardware.
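To make the outlier argument above concrete, here is a minimal NumPy sketch that compares plain affine round-to-nearest (RTN) quantization with RTN plus a per-group clipping search. It is a toy stand-in, not Private LLM's pipeline. OmniQuant learns its clipping parameters by gradient descent against block outputs on calibration data, whereas this sketch grid-searches a single shrink factor per group against weight MSE. The 4-bit width, group size of 64, and injected outliers are illustrative assumptions. GPTQ's Hessian-based error compensation is not shown.

```python
import numpy as np


def quantize_affine(group: np.ndarray, bits: int = 4, clip_ratio: float = 1.0) -> np.ndarray:
    """Affine (asymmetric) round-to-nearest quantization of one weight group.

    clip_ratio < 1.0 shrinks the [min, max] range: extreme weights saturate at
    the range edges while the remaining weights get a finer step size.
    Returns dequantized weights so the error can be measured directly.
    """
    levels = 2 ** bits - 1
    w_max = group.max() * clip_ratio
    w_min = group.min() * clip_ratio
    scale = max((w_max - w_min) / levels, 1e-12)                # guard against a constant group
    q = np.clip(np.round((group - w_min) / scale), 0, levels)  # integer codes 0..levels
    return q * scale + w_min


def quantize_matrix(weights: np.ndarray, bits: int = 4, group_size: int = 64,
                    search_clip: bool = False) -> np.ndarray:
    """Quantize a matrix in row-major groups (weights.size must divide by group_size)."""
    # Candidate ratios 1.00, 0.95, ..., 0.05; k = 0 is exactly 1.0, i.e. plain RTN.
    ratios = [1.0 - 0.05 * k for k in range(20)] if search_clip else [1.0]
    out = []
    for group in weights.reshape(-1, group_size):
        candidates = [quantize_affine(group, bits, r) for r in ratios]
        errors = [np.mean((group - c) ** 2) for c in candidates]
        out.append(candidates[int(np.argmin(errors))])  # keep the lowest-MSE candidate
    return np.stack(out).reshape(weights.shape)


# Synthetic weights with a few large outliers (illustrative only).
rng = np.random.default_rng(0)
W = rng.normal(0.0, 0.02, size=(256, 256))
outliers = rng.choice(W.size, size=64, replace=False)
flat = W.reshape(-1)          # a view, so the next line modifies W in place
flat[outliers] *= 25.0

rtn = quantize_matrix(W, search_clip=False)
clipped = quantize_matrix(W, search_clip=True)
rtn_mse = float(np.mean((W - rtn) ** 2))
clip_mse = float(np.mean((W - clipped) ** 2))
print(f"affine RTN MSE:    {rtn_mse:.3e}")
print(f"clip-searched MSE: {clip_mse:.3e}")
```

Because a ratio of 1.0 (plain RTN) is always one of the candidates, the searched result can only match or beat RTN group by group. Any gain comes from giving the bulk of the weights a finer step at the cost of saturating the extremes.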

Download the Best Open Source LLMs

iOS

Qwen3 4B Based Models: for iPhones/iPads with 6GB+ RAM

DeepSeek R1 Distill Based Models: for iPhones/iPads with 8GB+ RAM

DeepSeek R1 Distill Based Models: for iPhones/iPads with 16GB+ RAM

Meta Llama 3.2 3B Based Models: for iPhones/iPads with 6GB+ RAM

Meta Llama 3.2 1B Based Models: for iPhones/iPads with 4GB+ RAM

Google Gemma 3 1B Based Models: for iPhones/iPads with 4GB+ RAM

Google Gemma 2 9B Based Models: for iPhones/iPads with 16GB+ RAM

Google Gemma 2 2B Based Models: for iPhones/iPads with 4GB+ RAM

Meta Llama 3.1 8B Based Models: for iPhones/iPads with 8GB+ RAM

Meta Llama 3 8B Based Models: for iPhones/iPads with 6GB+ RAM

Qwen 2.5 Based Models: for iPhones/iPads with 4GB+ RAM

Qwen 2.5 Based Models: for iPhones/iPads with 8GB+ RAM

Qwen 2.5 Based Models: for iPhones/iPads with 8GB+ RAM

Qwen 2.5 14B Based Models: for iPhones/iPads with 16GB+ RAM

Phi-3 Mini 3.8B Based Models: for iPhones/iPads with 6GB+ RAM

Mistral 7B Based Models: for iPhones/iPads with 6GB+ RAM

Phi-2 3B Based Models: for iPhones/iPads with 4GB+ RAM

H2O Danube Based Models: for iPhones/iPads with 4GB+ RAM

StableLM 3B Based Models: for iPhones/iPads with 4GB+ RAM

TinyLlama 1.1B Based Models: for iPhones/iPads with 4GB+ RAM

Yi 6B Based Models: for iPhones/iPads with 6GB+ RAM

macOS

DeepSeek R1 Distill Based Models: for Apple Silicon Macs with 16GB+ RAM

DeepSeek R1 Distill Based Models: for Apple Silicon Macs with 32GB+ RAM

DeepSeek R1 Distill Based Models: for Apple Silicon Macs with 48GB+ RAM

Google Gemma 3 1B Based Models: for Apple Silicon Macs with 8GB+ RAM

Phi-4 14B Based Models: for Apple Silicon Macs with 16GB+ RAM

Meta Llama 3.3 70B Based Models: for Apple Silicon Macs with 48GB+ RAM

Meta Llama 3.2 3B Based Models: for Apple Silicon Macs with 8GB+ RAM

Meta Llama 3.2 1B Based Models: for Apple Silicon Macs with 8GB+ RAM

Meta Llama 3.1 70B Based Models: for Apple Silicon Macs with 64GB+ RAM

Meta Llama 3.1 8B Based Models: for Apple Silicon Macs with 8GB+ RAM

Qwen 2.5 Based Models: for Apple Silicon Macs with 8GB+ RAM

Qwen 2.5 14B Based Models: for Apple Silicon Macs with 16GB+ RAM

Qwen3 4B Based Models: for Apple Silicon Macs with 16GB+ RAM

Qwen 2.5 32B Based Models: for Apple Silicon Macs with 24GB+ RAM

Google Gemma 2 9B Based Models: for Apple Silicon Macs with 16GB+ RAM

Google Gemma 2 2B Based Models: for Apple Silicon Macs with 8GB+ RAM

Meta Llama 3 70B Based Models: for Apple Silicon Macs with 48GB+ RAM

Meta Llama 3 8B Based Models: for Apple Silicon Macs with 8GB+ RAM

Phi-3 Mini 3.8B Based Models: for Apple Silicon Macs with 8GB+ RAM

Mixtral 8x7B Based Models: for Apple Silicon Macs with 32GB+ RAM

Llama 33B Based Models: for Apple Silicon Macs with 24GB+ RAM

Llama 2 13B Based Models: for Apple Silicon Macs with 16GB+ RAM

CodeLlama 13B Based Models: for Apple Silicon Macs with 16GB+ RAM

Llama 2 7B Based Models: for Apple Silicon Macs with 8GB+ RAM

Solar 10.7B Based Models: for Apple Silicon Macs with 16GB+ RAM

Phi-2 3B Based Models: for Apple Silicon Macs with 8GB+ RAM

Mistral 7B Based Models: for Apple Silicon Macs with 8GB+ RAM

StableLM 3B Based Models: for Apple Silicon Macs with 8GB+ RAM

Yi 6B Based Models: for Apple Silicon Macs with 8GB+ RAM

Yi 34B Based Models: for Apple Silicon Macs with 24GB+ RAM

How Can We Help?

Whether you've got a question or you're facing an issue with Private LLM, we're here to help you out. Just drop your details in the form below, and we'll get back to you as soon as we can.